Hortonworks Sandbox

A Hands-On Example

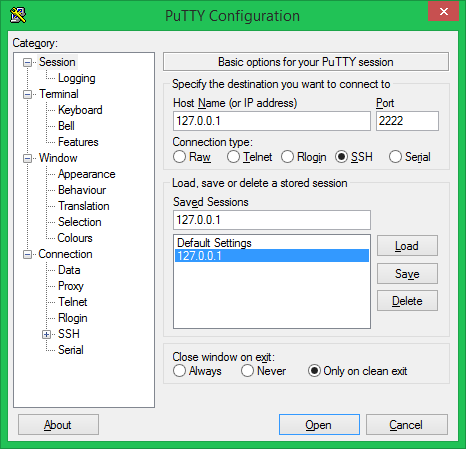

Let’s open a shell to our Sandbox through SSH:

|

1 |

ssh -p 2222 root@127.0.0.1 |

or putty

Then let’s get some data with the command below in your shell prompt:

|

1 |

# wget http://en.wikipedia.org/wiki/Hortonworks |

Copy the data over to HDFS on Sandbox:

|

1 2 |

# hadoop fs -put ~/Hortonworks /user/guest/Hortonworks put: Permission denied: user=root, access=WRITE, inode="/user/guest/Hortonworks._COPYING_":guest:guest:drwxr-xr-x |

ลอง ls ดูดิ

|

1 2 3 4 5 6 7 8 9 10 11 12 13 |

# hadoop fs -ls /user Found 11 items drwxrwx--- - ambari-qa hdfs 0 2015-10-27 12:39 /user/ambari-qa drwxr-xr-x - guest guest 0 2015-10-27 12:55 /user/guest drwxr-xr-x - hcat hdfs 0 2015-10-27 12:43 /user/hcat drwx------ - hdfs hdfs 0 2015-10-27 13:22 /user/hdfs drwx------ - hive hdfs 0 2015-10-27 12:43 /user/hive drwxrwxrwx - hue hdfs 0 2015-10-27 12:55 /user/hue drwxrwxr-x - oozie hdfs 0 2015-10-27 12:44 /user/oozie drwxr-xr-x - solr hdfs 0 2015-10-27 12:48 /user/solr drwxrwxr-x - spark hdfs 0 2015-10-27 12:41 /user/spark drwxr-xr-x - unit hdfs 0 2015-10-27 12:46 /user/unit drwxr-xr-x - zeppelin zeppelin 0 2015-10-27 13:19 /user/zeppelin |

login เป็น hdfs แล้วเปลี่ยนสิทธิ์ให้ /user/guest

|

1 2 3 4 |

# su hdfs $ hadoop fs -chmod -R 777 /user/guest $ exit exit |

จากนั้นค่อย put ลงไปใหม่ ก็จะได้ละ

|

1 2 3 4 |

# hadoop fs -put Hortonworks /user/guest/Hortonworks # hadoop fs -ls /user/guest Found 1 items -rw-r--r-- 3 root guest 53218 2015-12-01 08:29 /user/guest/Hortonworks |

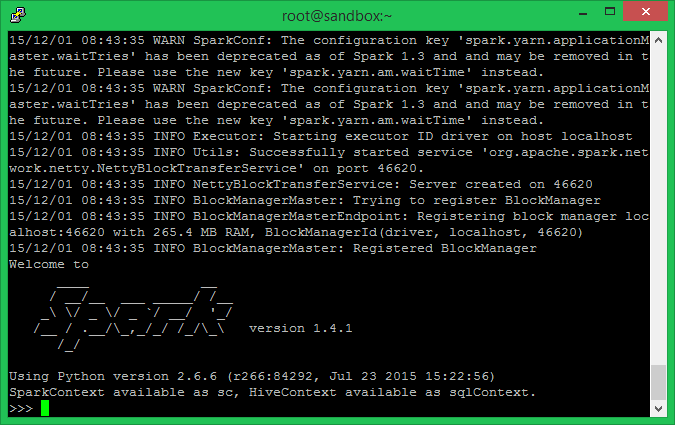

Let’s start the PySpark shell and work through a simple example of counting the lines in a file. The shell allows us to interact with our data using Spark and Python:

|

1 |

pyspark |

As discussed above, the first step is to instantiate the Resilient Distributed Dataset (RDD) using the Spark Context sc with the file Hortonworks on HDFS.

|

1 |

myLines = sc.textFile('hdfs://sandbox.hortonworks.com/user/guest/Hortonworks') |

Now that we have instantiated the RDD, it’s time to apply some transformation operations on the RDD. In this case, I am going to apply a simple transformation operation using a Python lambda expression to filter out all the empty lines.

|

1 |

myLines_filtered = myLines.filter( lambda x: len(x) > 0 ) |

Note that the previous Python statement returned without any output. This lack of output signifies that the transformation operation did not touch the data in any way but has only modified the processing graph.

Let’s make this transformation real, with an Action operation like ‘count()’, which will execute all the transformation actions before and apply this aggregate function.

|

1 |

myLines_filtered.count() |

The final result of this little Spark Job is the number you see at the end.